No-reference video quality assessment

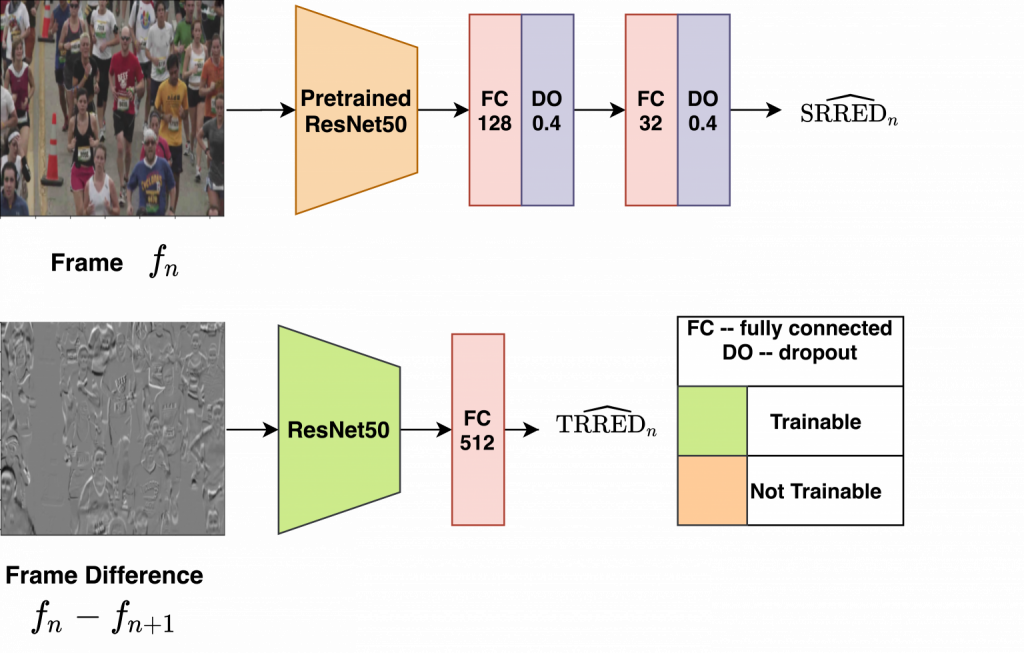

We consider the problem of robust no-reference (NR) video quality assessment (VQA), where algorithms must generalize well when they are trained and tested on different datasets. We address this problem specifically in the context of predicting video quality for compression and transmission applications. Motivated by the success of the spatio-temporal entropic differences video quality predictor in this context, we design a framework that uses convolutional neural networks to predict spatial and temporal entropic differences without the need for a reference video or human opinion scores. This approach enables our model to capture both spatial and temporal distortions effectively and allows for robust generalization. We evaluate our algorithms on a variety of datasets and show superior cross-database performance compared to state-of-the-art NR VQA algorithms.
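As a rough illustration of what a cross-database evaluation measures, the sketch below scores how well a model's predictions on an unseen dataset agree with that dataset's subjective scores. It assumes Spearman rank-order correlation as the agreement measure, a common choice in the VQA literature; the summary above does not name the metric used, and the function names and the example scores are hypothetical.

```python
def rank(values):
    """Return 1-based ranks, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        # Extend j to cover the run of values tied with position i.
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    return ranks


def srocc(predicted, subjective):
    """Spearman rank-order correlation: Pearson correlation of the ranks."""
    if len(predicted) != len(subjective) or len(predicted) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    rp, rs = rank(predicted), rank(subjective)
    n = len(rp)
    mean_p, mean_s = sum(rp) / n, sum(rs) / n
    cov = sum((a - mean_p) * (b - mean_s) for a, b in zip(rp, rs))
    var_p = sum((a - mean_p) ** 2 for a in rp)
    var_s = sum((b - mean_s) ** 2 for b in rs)
    if var_p == 0 or var_s == 0:
        raise ValueError("correlation is undefined when all scores are equal")
    return cov / (var_p * var_s) ** 0.5


# Hypothetical scores for four test videos from a dataset the model
# was NOT trained on (illustrative values only, not results).
predicted_quality = [0.2, 0.5, 0.9, 0.4]
subjective_scores = [1.5, 3.0, 4.5, 2.0]
print(srocc(predicted_quality, subjective_scores))
```

Because the measure depends only on rank order, it does not require the predicted quality to be on the same scale as the subjective scores, which is convenient when no human opinion scores are used in training.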

References:

S. Mitra, R. Soundararajan and S. S. Channappayya, “Predicting Spatio-Temporal Entropic Differences for Robust No Reference Video Quality Assessment,” in IEEE Signal Processing Letters, vol. 28, pp. 170-174, 2021.

Faculty: Rajiv Soundararajan, ECE